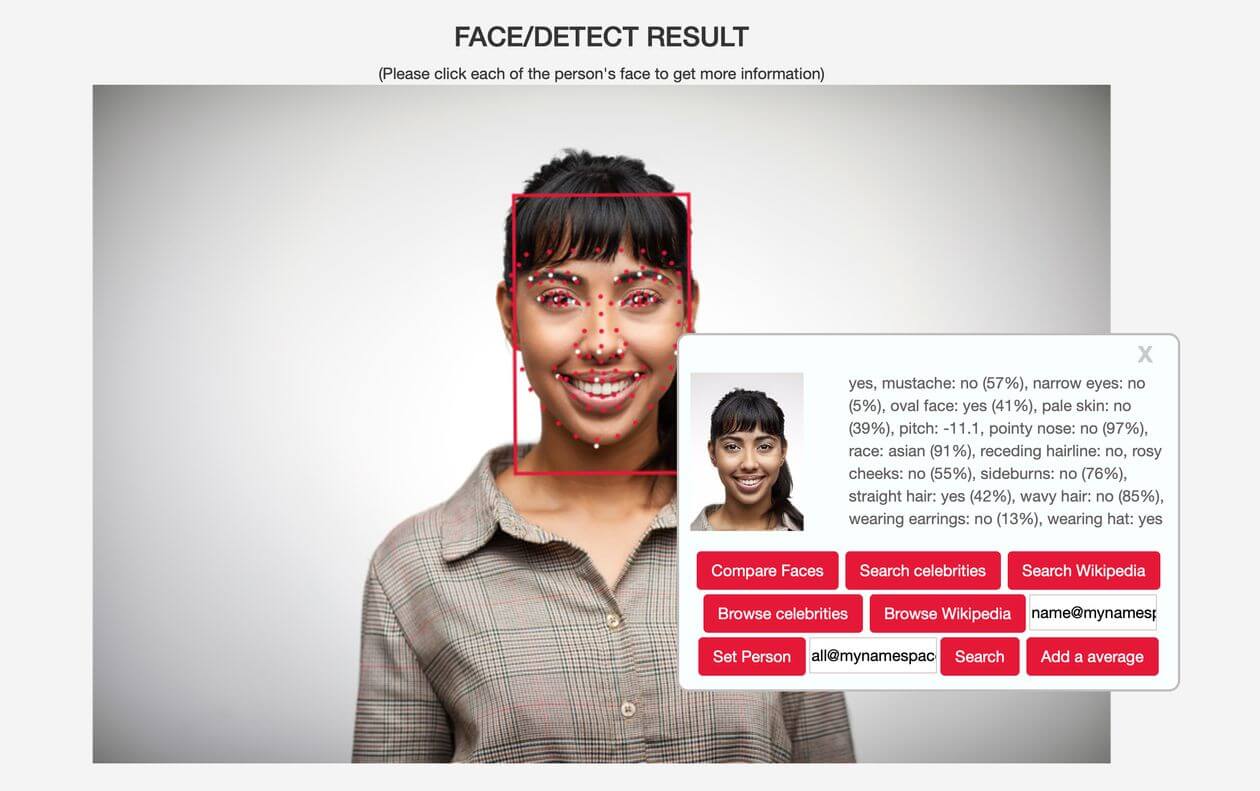

Today, more than a dozen companies offer some form of race or ethnicity detection, according to a review of websites and marketing literature, as well as interviews.

In the last few years, companies have started using such race-detection software to understand how certain customers use their products, who looks at their ads, or what people of different racial groups like. Others use the tool to search stock-photography collections for images with particular racial features, typically for ads, or in security, to help narrow the search for a person in a database.

…

Some researchers and even vendors say race-based facial analysis should not exist. For decades, governments have barred doctors, banks and employers from using information about race to decide which patients to treat, which borrowers to grant mortgages and which job applicants to hire. Race-detection software raises the disconcerting possibility that institutions could, intentionally or not, make decisions based on a person's ethnic background in ways that are harder to detect because they happen inside complex or opaque algorithms, according to researchers in the field.

A person of mixed race might disagree with how an algorithm classifies them, says Carly Kind, director of the Ada Lovelace Institute in London, a research group that focuses on applications of AI. “Technological systems have the power to turn things into data, into facts, and seemingly objective conclusions,” she says.