The following is the essence of the controversy:

- IARC has classified glyphosate as “probably carcinogenic to humans.”

- The EPA has classified glyphosate as “not likely to be carcinogenic to humans.”

How is it possible that two organizations had the same data available and came to opposite conclusions?

The process

The process used to determine whether or not a chemical causes cancer is to examine four types of studies:

- Epidemiology (human) studies

- Studies in laboratory animals

- Genotoxicity studies – the damage to genetic material

- Exposure studies – how people are exposed to the chemical

There were fundamental differences between the studies selected for evaluation by the EPA and IARC that contributed to the different conclusions:

EPA: The EPA collected data from the open literature and other publicly available sources, from its internal databases, and from studies submitted to the EPA by industry for pesticide registration purposes.

IARC: IARC limited its data collection to peer-reviewed studies available in the open literature. Industry-submitted studies that were not available in the public domain were excluded.

But the types of studies selected, including their quality, determine the results.

Limiting the data is never good for the scientific process.

Because of its selection criteria, IARC did not examine the complete database. IARC followed the old mantra that “industry is bad,” that industry studies must therefore be tainted, and that only peer-reviewed published studies can be trusted. But the reality is that studies carried out by industry are just as valuable as studies carried out by academics. Peer review does not guarantee quality studies – some of the most significant cases of fraud in science were carried out by scientists who published in peer-reviewed journals. I don’t often compliment the EPA, but in this case, they examined all the data they could find and didn’t hide behind the mantras of “industry is bad” and “peer review is good.”

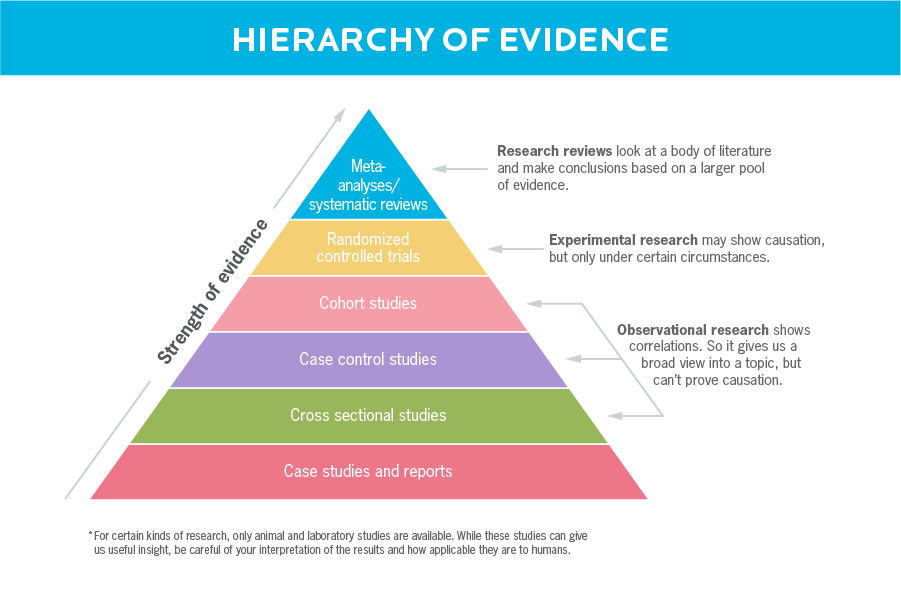

Not all studies are equal

The EPA evaluates the totality of the evidence using a weight-of-evidence approach. Studies are assessed based on their quality, and higher-quality studies are given more weight in the analysis than lower-quality studies. Quality is assessed based on many factors, including whether appropriate methodologies and statistical methods were employed, whether sufficient data and details were provided about how the study was carried out, and whether exposure measurements were clear and well-defined.

In a weight-of-evidence approach, if one study involving a large number of people with good exposure measurements of the chemical showed no increase in cancer, and several small studies without good exposure measures showed an increase in cancer, the larger study would be given more weight in the overall evaluation than the smaller studies.

IARC, on the other hand, used a “study-by-study” approach in their analysis. Each study was examined separately, and the studies were not weighted against one another. The philosophy underlying their analysis was “one positive study outweighs all the negative studies,” which is in line with the adoption of the “Precautionary Principle” by the European Union. The Precautionary Principle states that uncertainty about possible hazards is a strong reason to ban or limit the use of chemicals or technology.
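The contrast between the two approaches can be illustrated with a minimal sketch. Everything in it is an assumption made for illustration: the study names, sample sizes, and quality scores are invented, and the weighting rule (sample size times a quality score) is a simplification, not the procedure either agency actually uses.

```python
from dataclasses import dataclass


@dataclass
class Study:
    name: str
    participants: int      # sample size (hypothetical)
    quality: float         # 0.0 to 1.0, hypothetical quality score
    found_increase: bool   # did the study report an increase in cancer?


def study_weight(study: Study) -> float:
    """Hypothetical weight: larger, better-run studies count for more."""
    return study.participants * study.quality


def positive_weight_share(studies: list[Study]) -> float:
    """Weight-of-evidence style: share of total weight held by positive studies."""
    total = sum(study_weight(s) for s in studies)
    positive = sum(study_weight(s) for s in studies if s.found_increase)
    return positive / total


def any_positive(studies: list[Study]) -> bool:
    """Study-by-study style as the article describes it: one positive study is enough."""
    return any(s.found_increase for s in studies)


# Invented example: one large, well-measured study and two small, weaker ones.
studies = [
    Study("large cohort", participants=50_000, quality=0.9, found_increase=False),
    Study("small study A", participants=300, quality=0.4, found_increase=True),
    Study("small study B", participants=200, quality=0.4, found_increase=True),
]

share = positive_weight_share(studies)
flagged = any_positive(studies)
print(f"Share of evidence weight that is positive: {share:.2%}")
print(f"Flagged under an any-positive rule: {flagged}")
```

With these invented inputs, the two small positive studies carry well under 1% of the total weight, yet the any-positive rule still flags the chemical. The same set of studies can therefore support opposite conclusions depending on the rule applied.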

Additionally, IARC did not follow any established procedure for evaluating the strength or quality of the studies. Although IARC states they evaluate study quality, the procedure they followed was not published – so much for transparency.

Some data specifics

Genotoxicity studies examine damage to an organism’s DNA. Some genotoxicity studies are done in cells and are quick, inexpensive tests that determine whether a chemical causes a mutation in the cell. Other genotoxicity tests are done in laboratory animals and examine damage to the animal’s genetic material. But the fact that a chemical damages DNA does not mean it causes cancer, and conversely, some chemicals that cause cancer do not damage DNA. Therefore, both EPA and IARC use genotoxicity studies as secondary evidence in the evaluation of cancer-causing substances. They are not the definitive factor in the analysis.

As discussed by Dr. Bloom, the EPA measures risk, while IARC measures hazard. The EPA uses studies on exposure to the chemical to determine the likelihood that cancer will occur in the general population (risk). IARC considers whether the chemical could cause cancer under any circumstance, even the very unlikely (hazard).

As every toxicologist knows: The dose makes the poison. Therefore, the results from a study in which workers were exposed to very high levels of a chemical have limited relevance to a general population exposed to very low levels of that chemical.
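The hazard-versus-risk distinction can be sketched in a few lines. This is a deliberately simplified illustration, not either agency's method: the dose values and the exposure figure are invented, and a real risk assessment involves much more than comparing exposure with the lowest dose at which an effect was seen.

```python
def is_hazard(effect_doses: list[float]) -> bool:
    """Hazard question: was an effect observed at any dose at all?"""
    return len(effect_doses) > 0


def is_risk(effect_doses: list[float], exposure: float) -> bool:
    """Risk question (simplified): does real-world exposure reach
    the lowest dose at which an effect was observed?"""
    return bool(effect_doses) and exposure >= min(effect_doses)


# Hypothetical numbers, same arbitrary unit throughout (e.g. mg/kg/day).
doses_with_effect = [1000.0, 2000.0]   # effects seen only at very high doses
typical_exposure = 0.1                 # assumed general-population exposure

hazard = is_hazard(doses_with_effect)
risk = is_risk(doses_with_effect, typical_exposure)
print(f"Hazard: {hazard}, Risk at typical exposure: {risk}")
```

Under these assumed numbers, the chemical counts as a hazard because an effect exists at some dose, but it does not count as a risk because the assumed exposure is far below any dose that produced an effect.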

Overall analysis

Epidemiologic Studies: The EPA considered 58 studies, IARC 26. IARC concluded that there was limited evidence in humans because one study showed a positive association with Non-Hodgkin’s lymphoma. The EPA felt this was “insufficient evidence in humans” because other studies of equal quality contradicted the one study that showed a possible association with Non-Hodgkin’s lymphoma.

Animal Studies: The EPA considered 14 studies, IARC 10. The EPA concluded that there was insufficient evidence in animals because, although positive trends were seen in several studies, the tumor findings were not reproduced in studies of equal quality. IARC concluded that there was sufficient evidence in animals because positive trends were reported in several studies. “Positive trends” does not imply statistical significance; it just means that several data points showed an increase in tumors.

Genetic Toxicity: The EPA considered 104 studies, IARC 1118. The EPA concluded that there was no overall evidence of genotoxicity because the overall weight-of-evidence was negative. The positive results were only seen at high doses, not relevant to human exposures. IARC concluded that there was strong evidence of genotoxicity based on the positive results seen in some studies in rats and mice.

Conclusions

In summary, 1) IARC examined a limited number of studies because it rejected industry studies that were not published in peer-reviewed journals, 2) it used a study-by-study approach in which one positive study outweighed many negative studies of equal or better quality, and 3) by focusing on hazard rather than risk, it ignored the empirical findings of exposure data. IARC’s process of evaluation is flawed, leaving the impression that they had a predetermined outcome.

The use of a flawed process leads to bad science that never goes away. Particularly in this world of social media and TV pundits, bad science gets repeated over and over again and accepted as gospel. Simultaneously, the objections are ignored or forgotten as the media searches for quick and eye-catching headlines.

Susan Goldhaber, M.P.H., is an environmental toxicologist with over 40 years’ experience working at Federal and State agencies and in the private sector, emphasizing issues concerning chemicals in drinking water, air, and hazardous waste. Her current focus is on translating scientific data into usable information for the public.

A version of this article was originally posted at the American Council on Science and Health and has been reposted here with permission. The ACSH can be found on Twitter @ACSHorg