This is a big deal and a very good thing. While it doesn’t go far enough, it promises to help protect the powerful new tool of “gene editing” from being smothered in its cradle by the irrational fears that have hobbled traditional “GMOs” over the past three decades. Many of the improvements to plants and animals being made through gene editing could be accomplished with traditional breeding, albeit more slowly, with less precision, and at greater cost. And innovators have been worried they might be stifled as “GMOs” have been. For no legitimate reason, “GMOs” (a meaningless and misleading term) have been discriminated against by governments around the world that have caved in to Luddites and special interests pushing fear campaigns favoring obsolete and unsustainable (organic) approaches to agriculture. In spite of this, “GMOs” have still managed to create hundreds of billions of dollars in economic value and deliver enormous benefits to consumers, farmers and ranchers, and the environment. But this past is merely prologue.

Current rapid advances in gene editing have expanded our understanding and appreciation of the living world in a way that beggars anything that came before. “Gene editing” comes in several varieties (zinc finger nucleases, TALENs, meganucleases…), but the tall poppy among them is something known as CRISPR.

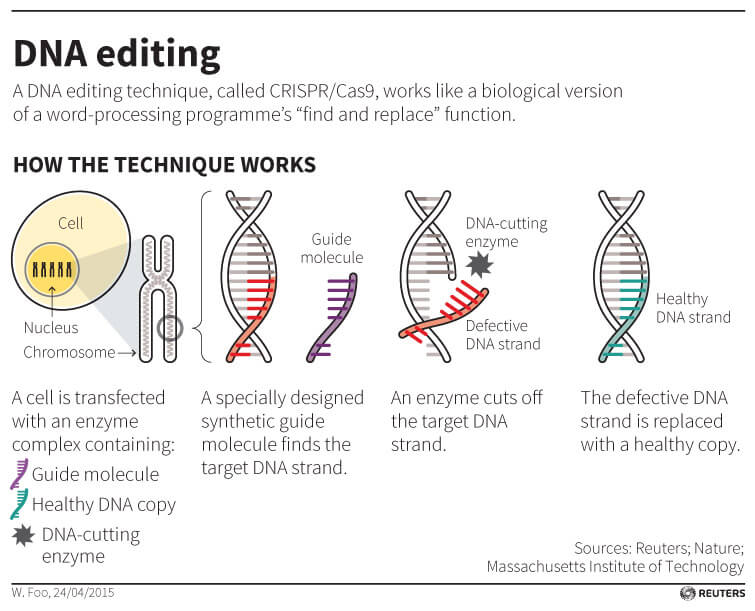

So what is CRISPR? We all know what word processing is: using software developed for the purpose allows us to compose text, and revise it at will by changing one letter in one word at a time, duplicating or deleting text chunks of any size, or by cutting and pasting letters, words, paragraphs or pages from anyplace we find them to any place we want to put them. Substitute “gene” for “word” in the description above, and “nucleotide base pair” for “letter,” and that’s gene editing.

CRISPR is something scientists discovered to be widely distributed in nature. It is effectively a bacterial immune system, a process that evolved to help bacteria protect themselves against the constant threat from viruses. It allows bacteria to recognize hostile viruses and disable them before they can take over the bacterium, use it to make myriad more viruses, and then discard the bacterium once it’s been used up. In seeking to understand such systems, and then to harness their capabilities, scientists have now given us the power to copy edit the book of life to solve problems we face.

With these new tools, some important questions loom. What should “the authorities” do to make sure that good stewardship of these developments allows us to reap their benefits without creating undue risks? What kinds of risks do they actually carry, and how might those be managed and mitigated? In other words, how should this stuff be regulated? USDA has signaled its intent not to overreact, breaking the pattern set by too many governments in the past, and many folks are presently grappling with these questions, on which reasonable people have room to disagree.

But note that asking “How should these things be regulated?” leapfrogs the prior question of whether, in fact, these things need to be regulated at all. What, in other words, is the purpose of regulation?

We don’t regulate products to eliminate risk. Zero risk is not an achievable objective. But we can and do regulate to eliminate “unreasonable risk” and manage and mitigate to ensure potential risks do not become unreasonable. Avoiding unreasonable risk has been the explicit objective of US agricultural biotechnology policy since its inception in 1986.

Closer examination of the potential for risk should clarify how it ought to be managed or mitigated. What novel risks, if any, do the products of gene editing present to humans or the environment? If there are no novel risks here, if the kinds of risks (i.e., exposures to a hazard) that will come with these products are familiar to us from our past experience, then the question becomes “Are our means for managing/mitigating these familiar risks adequate to deal with any from these new products?” If the answer is “yes” we’re home free; existing laws are sufficient to enable regulators to prevent unreasonable risks. But if there are novel risks, new hazards and exposure pathways we have not seen before, then exactly what are they? And what level of risk do they entail? In other words, how much regulation do they need if we are to prevent unreasonable risks?

This ground has been plowed before. In the 1980s, an explosion in new capabilities thanks to genetic engineering prompted the same kinds of questions: How should things made with “recombinant DNA techniques” (i.e., “GMOs”) be regulated? Should they be regulated at all? Do they present novel risks? How can we manage any risks to avoid unreasonable risks? Years of workshops, symposia, studies by the National Academy of Sciences and similar bodies, reports by the former Congressional Office of Technology Assessment, and numerous Congressional hearings laid the groundwork for U.S. policy, which was set forth in 1986 and is known then and today as the “Coordinated Framework.” It was grounded in the global scientific consensus that genetic engineering does not present any novel risks.

Scientists around the world agreed that despite the power of the emerging technologies, anything produced with them would still be defined by its own characteristics. Whatever hazards they might carry are irrelevant unless they lead to exposure, and all the hazards scientists and regulators and the public have imagined are familiar: increased resistance or susceptibility to diseases, pests, environmental constraints, potential changes in toxicity or allergenicity, non-target impacts. All these are familiar and were and remain covered by existing regulatory authorities. Hence, experts in the past agreed that while there might be a need for a traffic cop to disentangle overlapping or competing jurisdictions, there didn’t and don’t appear to be any risks that fall between the cracks, nor any obvious or apparent pathways to unpleasant surprises. And so events have borne out, as the National Academy of Sciences recently reaffirmed for the eleventh time.

So we have a proven model – regulate things according to their own properties, which are the vehicles that convey risks or benefits, and not by the manner in which they were developed, which is opaque and irrelevant to the end user. This contrasts with the process-focused precautionary approach taken by Europe and some other countries. That approach counterintuitively imposes, in the name of “precaution,” the highest level of scrutiny on products derived from the most modern, precise, and predictable methods. It has stifled agricultural innovation in those countries and led to massive flight of talent and capital to the United States. And it is clear most of those countries desperately want to avoid making the same mistakes with gene editing, for they do not want to be left behind, either in the development or use of these technologies.

What could go wrong? Any tool can be misused by someone with ill intent, and additional regulation will not stop such misuse, since it is already against the law. The use of this technology in agriculture generally comes down to improvements in plant or animal breeding, which means improved seeds or livestock. If there is ever a risk, those would be the primary vectors. So let us consider some concrete examples. What are the likely products of gene editing closest to the market?

Dairy cattle. Unlike most beef cattle, dairy cattle usually have horns. And since each and every (horned) dairy cow interacts with humans frequently, often several times a day, horns present a non-trivial risk. Consequently, most dairy cattle are dehorned as calves. This is not a pleasant procedure, for either the calf or the human performing it. But researchers at the University of California, Davis, have found a better way. Using gene editing, they have shut down the gene in dairy cattle that is responsible for the growth of horns. And better yet, this phenotype replicates something that happens naturally, and with which we are already very familiar through other cattle. So it should be no big deal, right?

Sadly, it’s been made into a big deal. Through a rigid interpretation of its legal authority under the Federal Food, Drug, and Cosmetic Act (FDCA), the FDA has decided it wants to treat this and other gene edited animals as if the edited gene were a new animal drug that must be subjected to the years of scrutiny and expense drug approval entails. The foolishness of this has been well described, as has the policy failure in FDA’s inability to find a way to exercise the discretion Congress clearly intended it to use. But far from responding wisely to such constructive criticism, FDA seems intent on moving ahead with a misguided policy that would not improve safety but will definitely crush innovation. The situation is so intolerable the livestock industry has risen in revolt, demanding Congress prohibit FDA from doing so and assign the authority to less hidebound and ideological stewards at USDA.

The story in USDA’s area of responsibility is much happier, even before Secretary Perdue’s recent announcement. Since 2011, USDA has been helping innovators determine if their products need to go through the full regulatory gauntlet or if there might be a quicker way to decide whether or not that’s really justified. Through the “Am I Regulated?” process developers can bring their improved seeds to USDA for an initial screening to determine if there’s sufficient potential for problems to call for a closer look. The process is not perfect, but is better than anything the FDA has come up with, and has established a strong track record of risk-based evaluations that have helped clear the way for innovators to innovate.

Another example of a new product developed through gene editing is the mushroom that resists browning. Researchers at Penn State used gene editing to turn off the gene in mushrooms that produces an enzyme that causes browning. This so-called “null” mutation mimics something that happens frequently in nature, in this case with completely harmless results. It is the exact same “loss of function” mutation that naturally produced golden raisins, or sultanas, that humans have been consuming for many years with no ill effects and without benefit of prior government approval. This mushroom was in fact scrutinized by USDA and found not worthy of further review – not exactly the “escape” from regulation many news items reported, which prompts the reminder: the purpose of regulation is to avoid unreasonable risk, not to perpetuate and expand regulations for the sake of regulation.

So where does all this leave us? This is plant and animal breeding, not rocket science. Seeds and animals do not blow up on the launch pad once in every hundred countdowns. Until and unless it can be shown that the use of “gene editing” imparts characteristics to the final product that present novel, unfamiliar, and unreasonable risks, there is no case for regulating gene-edited products differently from products derived from other methods. Regulatory scrutiny should be confined “to the novel properties of improved organisms.” Time to let a thousand flowers bloom.

Val Giddings is Senior Fellow at the Information Technology and Innovation Foundation. He previously served as vice president for Food & Agriculture of the Biotechnology Industry Organization (BIO), at the Congressional Office of Technology Assessment, and as an expert consultant to the United Nations Environment Programme, the World Bank, USDA, USAID, and companies, organizations and governments around the world. Follow him on Twitter @prometheusgreen.

This article was originally published on the Information Technology and Innovation Foundation’s website as “Gene Editing, Government Regulation, and Greening our Future” and has been republished with permission.