The science correspondent at Le Monde extinguished the last embers of the credibility and reputation of French molecular geneticist Gilles-Eric Séralini under the banner “Are GMOs Poisons? The Real End of the Séralini Affair” (“OGM-poisons ? La vraie fin de l’affaire Séralini”; see the Google English translation. Hint: “infox” is new French slang for “fake news,” a combination of infos, an abbreviation of informations, which means news, and intox, which means disinformation or hoax.)

Recapping de l’affaire Séralini

To recap briefly for those who might not be aware of the details: In 2012, Séralini, a scientist with a reputation for poorly designed studies purporting to show harms associated with GE crops, published what would become a blockbuster long-term feeding study that seemed to show that GE feed and Roundup residues caused massive tumors in rats. The study’s release was accompanied by a bizarre series of public relations stunts. It started with a press embargo that barred reporters from seeking expert opinion before the paper was publicly released. It also included the release of a propaganda book by the French molecular geneticist, a film, and the launch of multiple anti-GMO websites.

Writing for Discover, Carl Zimmer, considered the “dean of American science journalism”, characterized the release of the paper:

[O]utside experts quickly pointed out how flimsy it was, especially in its experimental design and its statistics. Scicurious has a good roundup of the problems at Discover’s The Crux. But those outside experts were slow to comment in part because reporters who got to see the paper in advance of the embargo had to sign a confidentiality agreement to get their hands on it. They weren’t allowed to show it to other experts.

…This is a rancid, corrupt way to report about science. It speaks badly for the scientists involved, but we journalists have to grant that it speaks badly to our profession, too. If someone dangles a press conference in your face but won’t let you do your job properly by talking to other scientists, WALK AWAY. If someone hands you confidentiality agreements to sign, so that you will have no choice but to produce a one-sided article, WALK AWAY. Otherwise, you are being played. Saying, “Well, everyone else is doing it” is no excuse. You do remember your mother asking what you’d do if everyone else jumped off the Brooklyn Bridge, right?

Then there were the photos of three tumor-riddled rats, but … no photos of the control rats. There was the very unsciencey use of “GMO” in the captions of the pictures, which I will not reproduce here, because enough is enough. And then there was the experimental design, which was a statistical fishing expedition for false positives, something we’ll get into in greater detail below.

Beat Späth, director for agricultural biotechnology at EuropaBio, has an excellent piece tallying the carnage left in Séralini’s wake in terms of the money wasted putting his fear-mongering propaganda posing as science to rest. He highlights the lives of rats needlessly wasted. Most consequentially, he details the needlessly precautionary regulations the EU saddled itself with in response to the agitprop photos of rat tumors in tumor-prone rats. He wrote:

Despite these findings, the damage has been far-reaching and pervasive with millions of citizens scared unjustifiably, €15 million of taxpayers’ money spent, and with thousands of rats having been needlessly used in feeding studies. Sadly, the needless use of rats still continues, because for every new GMO import authorisation, applicant companies are legally obliged to conduct 90-Day feeding studies. This is an obligation which came into force as a direct result of the Séralini affair. Furthermore, even though the legislation also foresees that the requirement for mandatory feeding studies should be reviewed in light of past research projects, the European Commission has so far refused to propose the abolishment of the legal requirement for rat testing, in direct contravention of the EU’s policies to reduce animal testing.

… For now, it seems, that despite everything, Séralini still wins.

Biological sciences student Sterling Ericsson reviewed the design and findings of the four feeding trials:

Four major research projects had been started by independent, though somewhat affiliated groups, on the subject of GM crops and any potential harms of them from long term consumption. Three of these are official EU-funded grant projects and, due to that, have no journal published paper, so we’ll discuss them last and in more fleeting detail. The main focus of this article will be on the study conducted by a massive team from multiple French universities and research labs involved in food science, toxicology, and even mathematics. That experiment is known as the GMO 90+ Project.

What I want to do is examine Séralini’s modus operandi and draw out some patterns that journalists and other interested observers can use to turn a critical eye on Séralini and his ilk, because they never stop. It’s also worth noting that two frequent Séralini co-authors, Michael N. Antoniou and Robin Mesnage, also publish on their own, and those two names on a paper should be seen as red flags that call for skepticism and scrutiny as well.

This suggests another tip for journalists that should be obvious but doesn’t seem to be practiced often enough: Google the authors. Try to get a sense of their reputations in the field. Most will be either well-respected or not well known. Occasionally, though, you will come across someone like Séralini, who is a notorious mountebank.

Beware of statistical fishing expeditions

As a physiologist-blogger on Discover noted:

For each experimental condition, there were three different doses of either the GMO maize (as a percent of the diet), Roundup, or both; the amount of doses of Roundup were all well below the approved doses. The 20 groups each contained 10 individuals, for a full total of 200 rats (100 male and 100 female). While 10 rats per condition might seem low, in a power analysis used to detect differences in response to, say a Roundup and non-Roundup condition, this would probably be OK. But how many final comparisons were the authors making? In the end, the authors compared each experimental condition to the same group of control rats, something that could severely bias the results. In most well-performed experiments, there would be a separate group of control rats for each condition, the GMO food alone, the GMO + Roundup, and the Roundup alone. The controls used for the study, as Anthony Trewavas, a cell biologist at the University of Edinburgh, pointed out in a press release response, are “inadequate to make any deduction.”

Séralini was looking at too many variables, given the size of his groups and their controls, to make any statistically meaningful deduction. But it’s worse than that. You may remember that a few years ago John Bohannon, the molecular biologist and science publishing gadfly, made a bit of a stir by conducting a chocolate feeding study that was purposely designed to produce clickbait findings: in this case, the wonderful news that eating lots of chocolate accelerated weight loss. Here’s what I said at the time on the Facebook page that I run, Food and Farm Discussion Lab:

I know what you’re thinking. The study did show accelerated weight loss in the chocolate group—shouldn’t we trust it? Isn’t that how science works?

Here’s a dirty little science secret: If you measure a large number of things about a small number of people, you are almost guaranteed to get a “statistically significant” result. Our study included 18 different measurements—weight, cholesterol, sodium, blood protein levels, sleep quality, well-being, etc.—from 15 people. (One subject was dropped.) That study design is a recipe for false positives.

Think of the measurements as lottery tickets. Each one has a small chance of paying off in the form of a “significant” result that we can spin a story around and sell to the media. The more tickets you buy, the more likely you are to win. We didn’t know exactly what would pan out—the headline could have been that chocolate improves sleep or lowers blood pressure—but we knew our chances of getting at least one “statistically significant” result were pretty good.

In order to get a ‘result’ from Sprague-Dawley rats at the 2-year mark, groups of 60 or larger are considered necessary. As noted above, Séralini used just 10 rats per group, and a single control rather than one control per group. Given the number of variables they were looking at, it was a near certainty that they would produce a dramatic false positive that could be trumpeted to the press.
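The lottery-ticket arithmetic quoted above can be made concrete with a rough sketch. It assumes, purely for illustration, that every measurement is an independent test run at the conventional 0.05 significance threshold and that there is no real effect to find; the function names are hypothetical, and nothing here reproduces the actual analysis in either the chocolate study or the Séralini paper.

```python
def familywise_false_positive_rate(num_tests: int, alpha: float = 0.05) -> float:
    """Chance of at least one 'significant' result when no real effect exists.

    Assumes the tests are independent (an idealization; real measurements
    such as weight and cholesterol are usually correlated).
    """
    return 1.0 - (1.0 - alpha) ** num_tests


def bonferroni_threshold(num_tests: int, alpha: float = 0.05) -> float:
    """Per-test threshold that keeps the overall false positive rate near alpha."""
    return alpha / num_tests


if __name__ == "__main__":
    # 18 is the number of measurements mentioned in the chocolate study quote.
    for tickets in (1, 5, 18):
        rate = familywise_false_positive_rate(tickets)
        print(f"{tickets:>2} measurements: {rate:.1%} chance of a spurious 'finding'")
    print(f"Bonferroni-corrected threshold for 18 tests: {bonferroni_threshold(18):.4f}")
```

Under those assumptions, 18 measurements give roughly a 60 percent chance of at least one spurious “significant” result. A correction such as Bonferroni’s, which divides the threshold by the number of tests, is one common way to guard against that; a study with many endpoints and no such correction deserves a skeptical read.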

Hypothesis testing studies vs. hypothesis generating studies

There is nothing wrong with looking at a large number of variables in an experiment. However, it’s hard to adequately test a hypothesis when doing that. Scientists use the kinds of feeding trials Séralini was pretending to conduct as a means of generating a hypothesis. You start with some kind of feed, not knowing what effects it might have; you look at a wide range of things and try to see if something happens. The evidence you get might point to a correlation that plausibly implies a cause. But then you need to design an experiment to specifically test this new hypothesis.

One of the tools in Séralini’s toolbox is that he knows that health reporters are rarely scientifically sophisticated enough to distinguish between experiments that are meant to generate a hypothesis and those that are meant to test—or falsify—a hypothesis. This is evident in the number of in vitro experiments he conducts.

In in vitro experiments, one puts cells in a petri dish and exposes them to a substance to see what happens. Is a substance toxic to salamanders? Put some salamander cells in a petri dish and squirt some pesticide on them. Nothing happens? It probably isn’t particularly toxic to salamanders. But what are you to conclude if it kills them? That actually doesn’t tell you that much, because it’s easy to kill exposed cells in a petri dish with known toxins. In vitro studies are cheap and easy to conduct. They can tell you when you might be at a dead end, or they might suggest that a larger, more robust animal feeding study is necessary. But they don’t deliver anything conclusive, only suggestions about further experimentation.

So, when Séralini published ‘Glyphosate Formulations Induce Apoptosis and Necrosis in Human Umbilical, Embryonic, and Placental Cells’ in 2009 or ‘Cytotoxicity on human cells of Cry1Ab and Cry1Ac Bt insecticidal toxins alone or with a glyphosate-based herbicide’ in 2011, any scientist with even a modicum of credibility would cautiously conclude that “more research is warranted.” But most health reporters, not quite up to speed on the difference among various experiments, would likely report a version of “OMG! This stuff is probably poison!”

In 2014, when Séralini and his frequent ‘partners in crime’ (Robin Mesnage, Nicolas Defarge, and Joël Spiroux de Vendômois) published ‘Major Pesticides Are More Toxic to Human Cells Than Their Declared Active Principles’ in the journal BioMed Research International, the paper was so bad in its study design and so overstated in its conclusions that it provoked eminent plant biotechnology professor Ralf Reski to resign from the journal’s editorial board.

Fun party trick: Do it wrong and count on nobody noticing

I’ve been part of discussions with scientists from the relevant fields about nearly every paper Séralini has published going back a decade. I’ve never seen one where the experiment was done properly. In this essay, I’m trying to illustrate common red flags that don’t require a master’s degree in the relevant field to put to use. This one gets tricky, because as a layperson it’s hard to know if the statistical models were sound or if the proper assay was used. For journalists, all I can say is that if a paper using an assay produces an outlier or dramatic result, get an expert and ask if proper and relevant testing methods were used.

Here’s another example that a lay reader could easily miss but that sticks out like a sore thumb to an expert. University of California-Davis animal geneticist Alison Van Eenennaam:

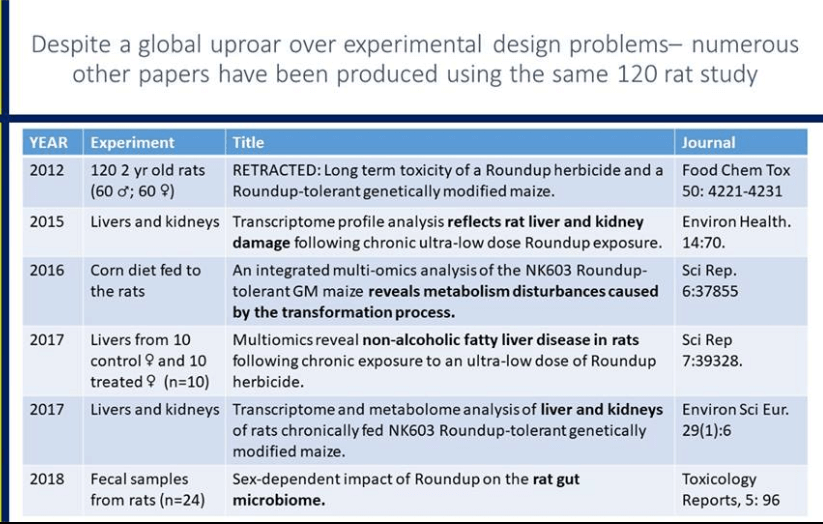

In what seems like a scene from the movie Groundhog Day, another rat study has come out of the laboratory of Dr. Gilles-Eric Séralini, only in this case it is Roundup and not GMOs that are under fire. When I read the title of the paper, “Multiomics reveal non-alcoholic fatty liver disease in rats following chronic exposure to an ultra-low dose of Roundup herbicide”, I assumed a new study had been performed by the laboratory showing what this specific title appears to conclude, i.e. that rats exposed to low levels of Roundup developed non-alcoholic fatty liver disease. However, when I read further I found that this was a study on tissues from a subset of the same lumpy rats that were involved in the famously retracted (and subsequently republished) paper from 2012 – the rats with horrific tumors (not fatty livers) due to GMOs (not glyphosate) that was breathlessly reported on the Doctor Oz show I participated in, and by media throughout the world.

I think if my work had been roundly criticized by scientific peers for poor experimental design and pathology data inadequacies, and critiqued by a multitude of separate national biosafety committees from Belgium, Brazil, European Union, Canada, France, Germany, Australia/New Zealand, and The High Council on Biotechnology, I would not double down and continue to analyze 5-year old samples from that same experiment. What is weird is that although I vividly remember the images of grotesque tumors on the white Sprague Dawley female rats, (one does not forget those images with a “GMO” label contrasted against the shocking tumors) I did not recall any mention of non-alcoholic fatty liver disease. So I went back to the original paper and searched for the term “fatty liver disease”. Nada.

Sometimes, a Séralini experimental design is so bad that even an English major could spot it. At the end of 2017, he published a paper purporting to show that expert wine tasters could taste pesticide residues in wine. The paper was widely touted on low-brow anti-GMO sites run by cronies of the French scientist, but understandably ignored by wearied mainstream journalists. This paper elevates the strategy of “Do it wrong and hope that nobody notices” up two levels to “Publish gibberish and hope that nobody notices.” Professor of weed science Andrew Kniss:

Séralini apparently believes that these pesticides at these levels are dangerous. So it is baffling to me that, if he truly believes that these low doses are harmful, he would knowingly ask 71 people to consume these pesticides, especially since the doses used in this study were “several thousand times above the admissible level in tap water (0.1 ppb).”

Ethical questions surrounding this research aside, I looked at the methods and results out of morbid curiosity about what a pesticide tasting study would reveal. I work with pesticides a lot. Over the years, I can honestly say I’ve never even accidentally tasted any of them. I’ve certainly smelled many of them but I’ve never had the desire to taste them. Okay, maybe I’ve secretly had the desire to taste Harness herbicide, which for some odd reason both looks and smells like grape kool-aid to me. But even then I’ve never actually followed through and tasted it.

After reading this paper twice, though, I still have no idea what they could taste, or how accurate they were at tasting it, or anything else really. The inconsistencies and weird data reporting and incomprehensible metrics and unreported observations made it impossible to even critique the paper in any meaningful way. Some examples: 71 professionals were recruited for the study, but the results state that “[o]ut of 195 tests, 147 were judged by 36 professionals as demonstrating a marked difference between the wines of the pair.” So 36 could apparently taste differences in the wines (one with and one without pesticides), but what about the other 35? They couldn’t? That’s half of the participants. And half is exactly what one would expect to occur by chance.
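The “half is what chance would give you” point can be checked with a little arithmetic. The sketch below assumes, purely for illustration, that each of the 71 recruited tasters is an independent coin flip with a 50 percent chance of reporting a difference. That model is a simplification introduced here, not something taken from the paper, and the function name is hypothetical.

```python
from math import comb


def binomial_upper_tail(n: int, k: int, p: float = 0.5) -> float:
    """Probability of k or more 'successes' out of n independent trials."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))


if __name__ == "__main__":
    tasters = 71             # professionals recruited, per the quote
    reported_difference = 36  # professionals who judged a marked difference
    chance = binomial_upper_tail(tasters, reported_difference)
    print(f"P(at least {reported_difference} of {tasters} by guessing) = {chance:.3f}")
```

Under that coin-flip assumption, seeing 36 or more of 71 tasters report a difference is about as likely as not, which is why a bare count like this cannot distinguish real tasting ability from guessing.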

It was surprising and comforting to note that, despite being designed to produce maximum clickbait with minimal insight, the wine study didn’t get much, if any, traction among health or environmental reporters. Perhaps with the end of the European ‘replication effort’, the Séralini pseudoscience syndicate will finally stop getting play in academic journals.

Marc Brazeau is the GLP’s senior contributing writer focusing on agricultural biotechnology. He also is the editor of Food and Farm Discussion Lab. Follow him on Twitter @eatcookwrite