Fourteen million people are diagnosed with a new cancer each year, according to the World Health Organization. Before scientists began analyzing the DNA of tumors, most cancers were categorized by their location in the body and treated with surgery, radiation and chemotherapy. But since the early 1980s, researchers have shifted focus, discovering that the location of a cancer matters far less than the genetic mutations that create it.

That discovery is the genesis of precision medicine: the idea that a tumor’s specific genetic makeup can reveal treatment targets no matter where in the body it grows. Several drugs have since been developed to treat cancers based on these mutations. The breast cancer drug Herceptin, for example, was developed to target an alteration in the HER2 gene that drives rampant cancer growth in breast tissue. Herceptin also works in urinary tract tumors that share the HER2 alteration.

But there are lingering questions about precision medicine’s efficacy, especially given its costs. The poor integration between the exponentially growing pile of genetic data and patients’ clinical health data may be slowing the development of more of these much-needed targeted therapies. And there’s a chance we’ve been doing precision medicine all wrong.

Oncologist Victor Velculescu and his colleagues at Johns Hopkins found that, in traditional tumor testing of about 800 patients, half of the cancer mutations picked up were false positives. The supposedly carcinogenic mutations were also present in DNA taken from the patients’ normal, healthy cells. A treatment aimed at one of these false-positive mutations would give the patient all the side effects and costs of therapy but none of the tumor-suppressing benefits, because the ‘tumor’ mutations caught by the test were not actually driving the cancer. The treatment would target a false culprit.

Velculescu’s group found that genotyping healthy tissue alongside tumor tissue identifies good, effective treatment targets more precisely, because any mutation that also appears in a person’s normal, healthy DNA can be ruled out. Skipping that comparison comes at a high price, Velculescu told NPR:

You can imagine patients being placed on a particular therapy, with all the side effects of that therapy but without any of the benefits…You can imagine that it prevents the patient from getting the right therapy. And then, finally, there are the additional costs of having therapies that aren’t really useful in any way.
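The comparison behind Velculescu’s approach, often called matched tumor-normal sequencing, boils down to a subtraction: any variant that shows up in both a patient’s tumor and their healthy cells was inherited, so it is set aside as a treatment target. The minimal Python sketch below assumes each variant call has already been reduced to a chromosome, position and base change; the `Variant` type, the `somatic_candidates` function and the variant positions are all hypothetical, made up for illustration. Real clinical pipelines use statistical variant callers that weigh read counts and sequencing errors rather than an exact set difference.

```python
from typing import NamedTuple


class Variant(NamedTuple):
    """Simplified (hypothetical) variant call: chromosome, position, reference base, alternate base."""
    chrom: str
    pos: int
    ref: str
    alt: str


def somatic_candidates(tumor_calls: set[Variant], normal_calls: set[Variant]) -> set[Variant]:
    """Return variants found in the tumor but absent from the patient's normal DNA.

    A variant present in both samples was inherited rather than acquired by the
    tumor, so it is dropped as a likely false-positive treatment target.
    This exact-match subtraction is a simplification, not a clinical method.
    """
    return tumor_calls - normal_calls


# Hypothetical calls for one patient; positions are invented for illustration.
tumor = {
    Variant("chr1", 1_234_567, "C", "T"),
    Variant("chr2", 2_345_678, "A", "G"),
    Variant("chr3", 7_654_321, "G", "A"),
}
normal = {
    Variant("chr2", 2_345_678, "A", "G"),  # also in healthy cells, so inherited
}

somatic = somatic_candidates(tumor, normal)
for v in sorted(somatic):
    print(f"{v.chrom}:{v.pos} {v.ref}>{v.alt}")
```

Run as-is, it prints the two tumor-only variants, chr1:1234567 C>T and chr3:7654321 G>A; the chr2 variant is filtered out because it also appears in the normal sample. Testing only the tumor would have kept all three.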

But double testing means double the cost, which health insurance companies are unlikely to cover. If the savings from eliminating treatments based on false positives were great enough, that could change.

One hurdle to cost management is that data from a patient’s genomic testing doesn’t sync well with data from medical records. This is a big problem in almost all facets of healthcare information technology: a patient’s health record will document that an x-ray was taken and what the x-ray showed, but will not link to the actual image data. Genomics faces the same problem, explains Mark Rubin in Nature:

Yet mountains of genomic data are accumulating that are of little use because they are not tied to clinical information, such as family medical history. What is more, genomic data are generally confined to documents that cannot easily be searched, shared or even understood by most physicians.

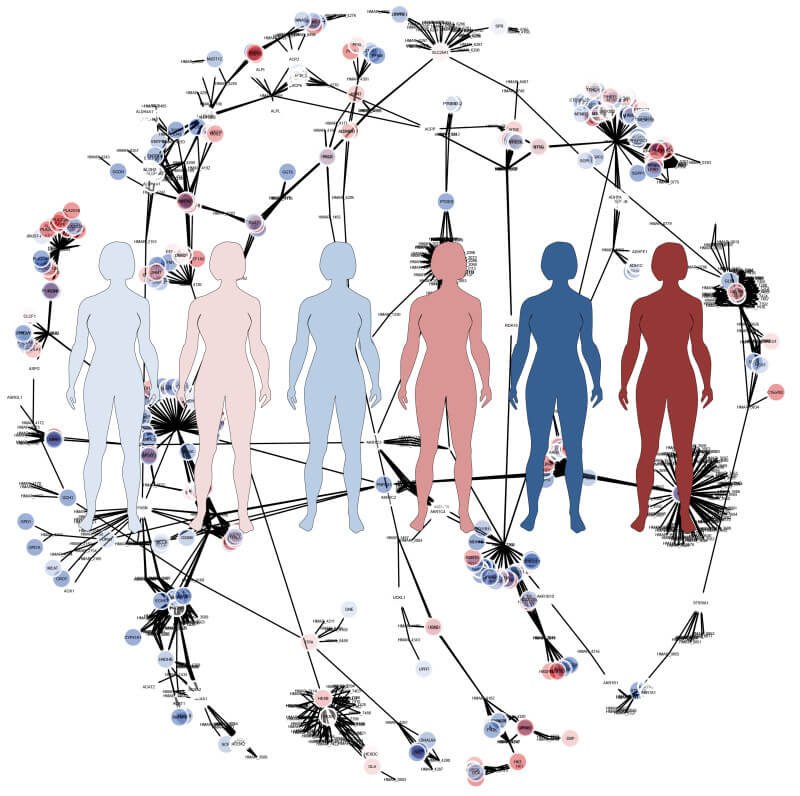

Because cancer-causing mutations are fairly rare, the search for new drug targets will require searching and analyzing data across millions of genomes housed in the data centers of many different medical centers, something that currently requires a very tedious data-sharing process, Rubin says. There may be hundreds of yet-to-be-discovered cancer mutations hiding in data we’ve already collected but are simply unable to analyze.

Several companies are working on this problem, including NantHealth and 23andMe. Both are trying to find meaningful ways to combine genetic information with health history. 23andMe, for example, asks its customers whether they’d like to take part in research that draws on the genetic analysis they paid for and their responses to online questionnaires. The company has launched a research portal that gives researchers outside the company access to this information, and it lists the publications on which it has collaborated.

But a handful of companies are unlikely to solve the communication and access problem on their own. That’s part of the reason many researchers were so pleased with President Barack Obama’s announcement early this year of $215 million in funding for precision medicine. Only $5 million of that goes toward developing standardized reporting and ‘interoperability’ of data. Even if the initiative’s goal of genotyping one million Americans is met, will the data infrastructure be good enough to capitalize on the data?

Although precision medicine has largely focused on cancers and their treatments, the theoretical promise is that many diseases follow this same pattern. Eventually the treatment of asthma, allergies, diabetes and heart disease, to name a few, could be tailored to the genetic risk factors that led a patient to develop the disease, writes David Shaywitz at Forbes.

I suspect that the lack of examples reflects the infancy of the field, rather than the fragility of the thesis… But, if only as a thought exercise, it’s worth at least considering what it would mean if the careful study of phenotype, and the resulting integration with genetic data doesn’t turn out to be as useful as we presently anticipate – what if the expected clinically informative, biologically-based subgroups don’t emerge?

Given the billions spent on human health genetics so far, that is indeed a provocative, if unlikely, question.

Meredith Knight is a contributor to the human genetics section for Genetic Literacy Project and a freelance science and health writer in Austin, Texas. Follow her @meremereknight.

Additional Resources:

- That ‘Precision Medicine’ initiative? A Reality Check, Genetic Literacy Project

- What is ‘precision medicine’?, Genetic Literacy Project

- Without accurate genome sequencing, personalized medicine is a goner, Genetic Literacy Project