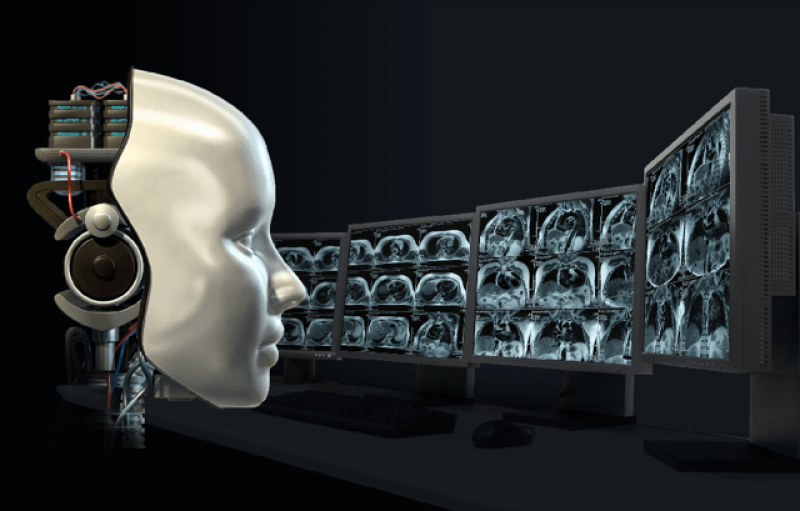

Today, hundreds of startup companies around the world are trying to apply deep learning to radiology. Yet the number of radiologists who have been replaced by AI is approximately zero.

…

Deep-learning systems excel at finding associations within the training data, but they cannot distinguish what is causally relevant from what is only accidentally correlated, like fuzz on an imaging device. Such spurious associations can wind up being heavily over-weighted.

In diagnosing skin cancer from images, for example, a dermatologist might use a ruler to size a lesion only if he or she suspects it is cancerous. In this way, the presence of a ruler becomes associated with a cancer diagnosis in the image data. An AI algorithm may well leverage this association, instead of the visual appearance of the lesion, to decide whether a lesion is cancerous. But rulers don’t cause cancer, so the system can easily be misled.
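The ruler effect can be reproduced in a toy setting. The sketch below is purely illustrative and uses synthetic numbers, not real dermatology data: a hypothetical `lesion` feature carries a weak but genuine signal, while a hypothetical `ruler` flag agrees with the diagnosis 95% of the time during training. All names and parameters (`make_data`, `ruler_agreement`, the noise level, the learning rate) are assumptions made for this example, and the classifier is a plain logistic regression rather than a deep network.

```python
import numpy as np

def make_data(n, ruler_agreement, rng):
    """Synthetic cases: one weak causal feature plus a 'ruler present' flag."""
    y = rng.integers(0, 2, size=n)                # 1 = cancerous, 0 = benign
    lesion = y + rng.normal(0.0, 1.5, size=n)     # noisy but genuinely informative
    agrees = rng.random(n) < ruler_agreement      # how often the ruler matches the label
    ruler = np.where(agrees, y, 1 - y)
    X = np.column_stack([lesion, ruler]).astype(float)
    return X, y

def fit_logistic(X, y, lr=0.5, steps=3000):
    """Logistic regression trained with plain batch gradient descent."""
    w = np.zeros(X.shape[1])
    b = 0.0
    for _ in range(steps):
        p = 1.0 / (1.0 + np.exp(-(X @ w + b)))    # predicted probability of cancer
        err = p - y
        w -= lr * (X.T @ err) / len(y)
        b -= lr * err.mean()
    return w, b

def accuracy(X, y, w, b):
    predictions = (X @ w + b > 0).astype(int)
    return float((predictions == y).mean())

rng = np.random.default_rng(0)

# Training set: rulers appear almost only next to cancerous lesions.
X_train, y_train = make_data(2000, ruler_agreement=0.95, rng=rng)
# Shifted set: rulers are placed at random, unrelated to the diagnosis.
X_shift, y_shift = make_data(2000, ruler_agreement=0.50, rng=rng)

w, b = fit_logistic(X_train, y_train)
train_acc = accuracy(X_train, y_train, w, b)
shifted_acc = accuracy(X_shift, y_shift, w, b)

print("weights [lesion, ruler]:", w)
print("accuracy with rulers tied to diagnosis:", train_acc)
print("accuracy with rulers placed at random: ", shifted_acc)
```

In this setup the model tends to put most of its weight on the ruler flag, so its accuracy falls sharply once rulers stop tracking the diagnosis, even though the lesion feature is just as informative as before.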

…

Genuine advances will require a sustained research effort into reworking the fundamentals of AI as we know it. The long-promised AI revolution may someday arrive, but for patient safety, it is important that we remain leery of premature declarations of victory.

Read full, original post: Advancing AI in health care: It’s all about trust