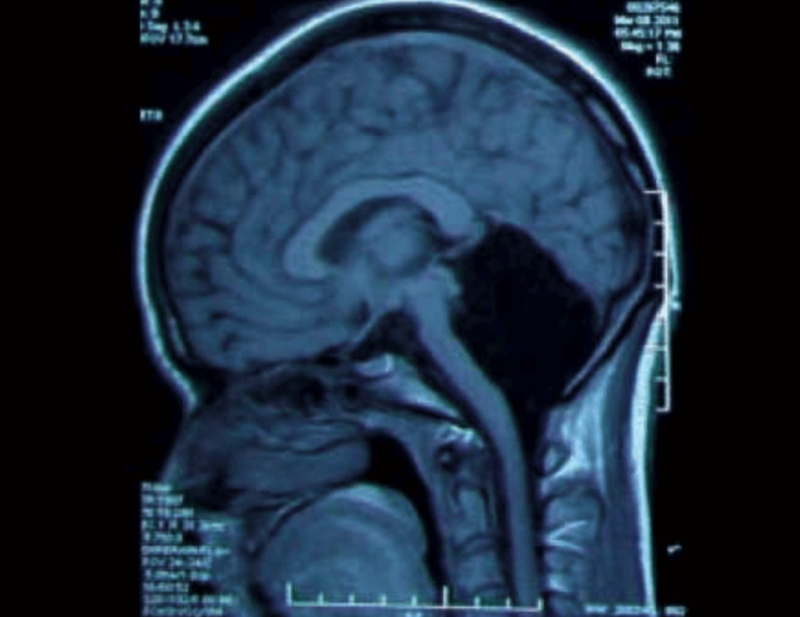

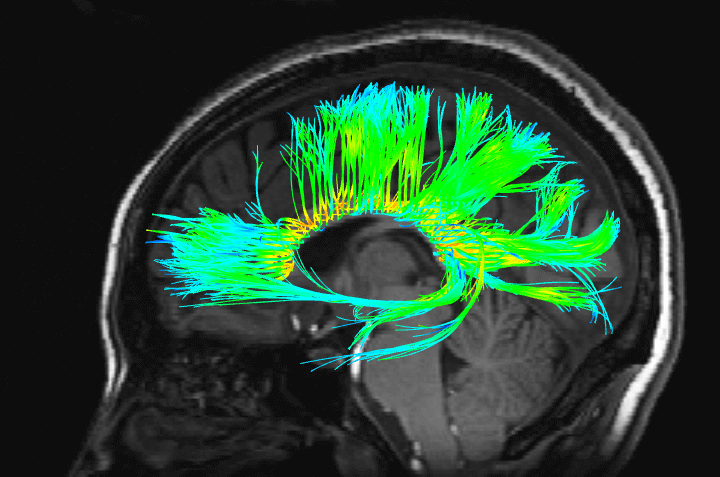

Yes, most of the players on the brain’s ‘stage’ were present: the cerebral cortex, the largest, outermost part of the brain responsible for most of our thinking and cognition, was present and accounted for; the subcortex and the midbrain, with their myriad functions involving movement, memory and body regulation – also present; the brainstem, essential for controlling breathing, sleep and communicating with the rest of the body – present and accounted for.

But none of these arenas hold the majority of the brain’s currency – neurons, the cells that fire impulses to transmit information or relay motor commands. This distinction goes to the cerebellum, a structure situated behind the brainstem and below the cerebral cortex. Latin for ‘little brain’, the highly compact cerebellum occupies only 10 per cent of the brain’s volume, yet contains somewhere between 50 and 80 per cent of the brain’s neurons. And indeed, it was in this sense that the hospitalised Chinese woman was missing the majority of her brain.

Incredibly, she had made it through nearly two and a half decades of life without knowing her cerebellum was missing. Compare that with strokes and lesions of the cerebral cortex, whose neuron-count is a fraction of the cerebellum’s. Patients with such injuries can lose the ability to recognise colours or faces and to comprehend language – or they might develop what’s known as a ‘disorder of consciousness’, a condition resulting in loss of responsiveness or any conscious awareness at all.

Why do neurons in our brain feel like an experience?

Understanding consciousness might be the greatest scientific challenge of our time. How can physical stuff, eg electrical impulses, explain mental stuff, eg dreams or the sense of self? Why does a network of neurons in our brain feel like an experience, when a network of computers or a network of people doesn’t feel like anything, as far as we know?

Alas, this problem feels impossible. And yet, an unmet need for progress in disorders of consciousness, in which the misdiagnosis rate is between 9 and 40 per cent, demands that we try harder. Without trying harder, we’ll never know if injured patients are truly unconscious – or unresponsive but covertly conscious with a true inner life. Without this knowledge, how can doctors know whether a patient is likely to recover or whether it’s ethical to withdraw care?

Many covertly conscious patients differ from those we know from popular culture, such as the French journalist Jean-Dominique Bauby. After suffering a stroke that damaged his brainstem, Bauby was left unable to move voluntarily except blinking his left eyelid. From his hospital bed, Bauby used eyeblinks to dictate a memoir of his harrowing experience, The Diving Bell and the Butterfly (1997). In Bauby’s case – referred to as locked-in syndrome – loss of voluntary control was caused by damage to the brainstem, which is essential for controlling the rest of the body and thus communicating with the outside world.

Covert consciousness includes not only locked-in syndrome, but also patients with damage to the cerebral cortex. In that instance, covert consciousness is harder to identify because the mental abilities these patients retain are likely impaired. For example, such a patient might be unresponsive not because she is unconscious, but because a lesion to her cerebral cortex has taken away her ability to understand spoken language.

And, unlike in Bauby’s case, an MRI image of these patients’ brains shows widespread cerebral damage that spells uncertainty to a neurologist trying to determine if anybody’s home. Even after such patients open their eyes and awaken from a coma, a total lack of responsiveness or voluntary movement often follows, garnering a diagnosis of the vegetative state, also known as unresponsive wakefulness syndrome.

Detecting consciousness

To detect covert consciousness in patients diagnosed with disorders of consciousness, an international team of researchers, including my current supervisor, Martin Monti, have used a clever task that exploits the mental imagery that some otherwise unresponsive patients can generate on command. The team wheeled 54 patients – ranging from those who were inconsistently responsive to others who were outright unresponsive – inside a brain scanner. There, the team imaged their brain function using functional MRI (fMRI) in order to deduce what fraction, if any, might be covertly conscious. ‘In a small minority of cases, we can use MRI to detect some awareness in patients who would otherwise seem unconscious,’ Monti said.

Monti and colleagues first asked seemingly unconscious patients to imagine walking through their homes. ‘We saw a flicker of fMRI activity in the parahippocampal gyrus in all but one participant,’ said Adrian Owen, another researcher involved with the project. But asking people to imagine walking through their homes wasn’t enough. To increase their confidence that the person in the scanner was awake and following instructions, the researchers also wanted a second task that would show a different pattern of activation. Finally, one of Monti and Owen’s colleagues, Melanie Boly, mentioned that, according to the research, complex tasks might work better than simple ones. ‘What about tennis?’ Owen responded.

Much to the researchers’ delight, asking healthy participants to imagine playing tennis yielded a clear and consistent signature of brain activation. Would the same task work in covertly conscious patients? Once inside the MRI machine, researchers asked unresponsive patients to imagine one of two tasks, playing tennis or walking around their house. Exactly how many patients would be responsive was anyone’s guess, Monti told me. But with the first swing of the racket, so to speak, the team found an otherwise unresponsive patient who appeared to understand the tennis task. The patient fulfilled all the criteria for a vegetative state diagnosis but was, in fact, conscious.

The resulting study, eventually published in 2010, was simultaneously hopeful and sobering: five out of 54 patients placed in the scanner were able to generate mental imagery on demand, evidence of minds that could think, feel and understand, but not communicate. Or could they? What if patients could use the two tasks to respond to questions, answering ‘yes’ or ‘no’ through internal imagery itself?

In short, ‘yes’ could be communicated by imagining tennis and ‘no’ by imagining walking around their house. Once again, the team found success on their very first attempt. Upon asking a patient several questions such as ‘Is your father’s name Thomas?’, the researchers received appropriate responses indicated by the signature image of each task, registered in the MRI. ‘Turns out, even patients who appear [to be] in a coma can have more cognitive function than can be observed with standard clinical methods,’ Monti said.

What anaesthesia reveals

Following in the footsteps of Monti and his colleagues, a study published in 2018 by a different group of researchers from the University of Michigan used a similar fMRI mental-imagery task to show covert consciousness where no one wants to find it: anaesthesia. Alarmingly, one out of the five healthy volunteers who had undergone general anaesthesia for the sake of science using the drug propofol was able to do what shouldn’t be possible: generate mental imagery upon request in the scanner. The implications are clear: we aren’t necessarily blissfully unconscious when the surgeon puts us under.

The fMRI tennis task has shown that under the veils of general anaesthesia and the vegetative state, consciousness occasionally lurks. But the task’s efficacy depends on patients being able to hear questions and understand spoken language, an assumption that is not always true in brain-injured individuals with cerebral lesions.

Consciousness can also occur without language comprehension or hearing. In their absence, a patient might still experience pain, boredom or even silent dreams. Indeed, if only certain regions of the cerebral cortex were lesioned, vivid conscious experiences might persist while the patient remains unable to hear the questions asked by Monti’s team. Because of this, MRI scans might miss many people who are conscious, after all. Rather than depending on mental imagery that must be wilfully generated by brain-injured patients who can hear and understand language, an alternative marker of consciousness – a superior consciousness detector – is needed to shine a piercing light in the dark.

Consciousness is a mystery. A multitude of scientific theories attempt to explain why our brains experience the world, rather than simply receiving input and producing output without feeling. Some of those theories are ‘out there’ – for instance, the framework developed by the British theoretical physicist Sir Roger Penrose and the American anaesthesiologist Stuart Hameroff.

Penrose and Hameroff link consciousness to microtubules, the filament-like structures that help form the skeletons of neurons and other cells. Inside microtubules, electrons can jump between different compartments. In fact, according to the rules that govern our universe at the level smaller than atoms, these electrons can exist in two compartments simultaneously, a state known as quantum superposition. Consciousness enters the picture largely due to an interpretation of quantum physics: the claim that a conscious observer is needed for a particle such as an electron to have a definite location in space, thus ending the superposition. As Hameroff said in an interview for the PBS series Closer to Truth:

You have a superposition of possibilities that collapse to one or the other, and when that happens there’s a moment of subjectivity. This seemed like a stretch and still does to many people, but as Sherlock Holmes said: ‘If you eliminate the impossible, whatever’s left, no matter how seemingly improbable, must be correct.’

This makes sense to Hameroff but not to me. Following that Holmesian adage to ‘eliminate the impossible’, I reject the conflation of quantum spookiness with consciousness. For one, the elaborate theory of consciousness developed by Penrose and Hameroff requires a new physics – quantum gravity – that hasn’t been developed yet. But more than that, Penrose and Hameroff’s framework fails to explain why the cerebellum is not involved in consciousness. Cerebellar neurons have microtubules too. So why can the cerebellum be lost or lesioned without affecting the conscious mind?

What underlies conscious experience?

An approach that I find more promising comes from the neuroscientist Giulio Tononi at the University of Wisconsin. Rather than asking what brain processes or brain structures are involved in consciousness, Tononi approaches the question from the other direction, asking what essential features underlie conscious experience itself.

For context, compare his approach to another big question: what is life? Living things pass traits to their offspring – so there must be genetic information passed from parent to child (or calf, or seedling). But living things also evolve and adapt to their environment – so this genetic information must be malleable, changing from generation to generation.

By approaching the problem from this bottom-up angle, you might have predicted the existence of a complex molecule such as DNA, which stores genetic information but also mutates, allowing for evolution by natural selection. In fact, the physicist Erwin Schrödinger nearly predicted DNA in his book What is Life? (1944) by viewing the problem from this direction. The opposite approach – looking at lots of living things and asking what they have in common – might not show you DNA unless you have an enormously powerful microscope.

Just as life stumped biologists 100 years ago, consciousness stumps neuroscientists today. It’s far from obvious why some brain regions are essential for consciousness and others are not. So Tononi’s approach instead considers the essential features of a conscious experience. When we have an experience, what defines it? First, each conscious experience is specific. Your experience of the colour blue is what it is, in part, because blue is not yellow. If you had never seen any colour other than blue, you would most likely have no concept or experience of colour. Likewise, if all food tasted exactly the same, taste experiences would have no meaning, and vanish. This requirement that each conscious experience must be specific is known as differentiation.

But, at the same time, consciousness is integrated. This means that, although objects in consciousness have different qualities, we never experience each quality separately. When you see a basketball whiz towards you, its colour, shape and motion are bound together into a coherent whole. During a game, you’re never aware of the ball’s orange colour independently of its round shape or its fast motion. By the same token, you don’t have separate experiences of your right and your left visual fields – they are interdependent as a whole visual scene.

Tononi identified differentiation and integration as two essential features of consciousness. And so, just as the essential features of life might lead a scientist to infer the existence of DNA, the essential features of consciousness led Tononi to infer the physical properties of a conscious system.

Consciousness detectors

Future engineers of consciousness-detectors, pay careful attention: these properties are exactly what such a fantastic machine should look for in the brains of unresponsive patients. Because consciousness is specific, a physical system such as the brain must select from a large repertoire of possible states to be conscious. As with the connection between life and DNA, this inference depends crucially on the concept of information.

Experiences are informative because they rule out other experiences: the taste of chocolate is not the taste of salt, and the smell of roses is not the smell of garbage. Because these experiences are informative and the brain is identified with consciousness, we infer that, as one’s consciousness increases, so too does information in the brain. In fact, when the brain is packed full of information, its repertoire of possible states increases.

This is like a game of hangman. First, consider playing hangman in English. The English alphabet contains 26 letters, and each letter correctly guessed is moderately informative of the word being revealed. Common letters such as ‘e’ are less informative, and rare letters such as ‘x’ are more informative (after all, how many English words are spelled with ‘x’?) But imagine playing hangman with Chinese script, which contains thousands of characters. Each character is highly informative because it occurs less frequently than almost any letter in English, like a rare beacon signalling a special occasion. And so, because Chinese script has a larger repertoire of possible characters, the entire game of hangman can be won by guessing a single character.
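For readers who like to see the arithmetic, the hangman intuition can be sketched with Shannon's self-information, which measures how informative a symbol is as the negative base-2 logarithm of its probability. This is only an illustration: the letter frequencies and the 3,000-character repertoire below are rough assumptions chosen for the example, not measured values, and the function name `surprisal_bits` is made up here.

```python
import math


def surprisal_bits(probability: float) -> float:
    """Shannon self-information, in bits, of a symbol with this probability."""
    return -math.log2(probability)


# Illustrative probabilities only (assumptions, not measured frequencies).
examples = {
    "common letter 'e'": 0.12,
    "rare letter 'x'": 0.0015,
    "one of 26 equally likely letters": 1 / 26,
    "one of 3,000 equally likely characters": 1 / 3000,
}

for label, p in examples.items():
    # The rarer the symbol, the more bits of information it carries.
    print(f"{label}: {surprisal_bits(p):.2f} bits")
```

The rarer a symbol is, the more bits it carries, and a symbol drawn from a larger repertoire carries more information than one drawn from a smaller repertoire.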

So it is too with the brain: when the repertoire of possible brain states is larger, its informational content increases, as does its capacity for highly differentiated conscious experiences. But at the same time, consciousness depends on integration: neurons must communicate and share information, otherwise the qualities contained in a conscious experience are no longer bound together. This simultaneous requirement for both differentiation and integration might feel like a paradox.

Here, a metaphor borrowed from Tononi offers clarity: the conscious brain is like a democratic society. Everyone is free to cast a different vote (differentiation) and talk to one another freely (integration). The unconscious brain, on the other hand, is more like a totalitarian society. Citizens might be forbidden to talk freely to one another (a lack of integration), or they might be forced to all vote the same way (a lack of differentiation).

Just as both differentiation and integration are necessary for democracy, they’re also necessary for consciousness. This is not merely an armchair musing: Tononi’s ideas are based on clinical observations. Among the most compelling of these data are reports of patients, such as the anonymous Chinese woman, who lack a cerebellum yet retain consciousness. The cerebellum, it turns out, is a totalitarian society. Its neurons, though they are many, don’t freely communicate with one another. Instead, cerebellar neurons are organised in chains: each neuron sends a message to the next neuron down the chain, but there is little communication between chains, nor is there feedback going the other direction down the chain.

Metaphors for the brain

To visualise this communication style, you might imagine many people standing in a line, each tapping the next person on the shoulder. Thus, while the cerebellum contains the majority of neurons in the brain, its neurons are like citizens in a society with little to no integration. Without integration, there is no consciousness. The cerebral cortex, on the other hand, is a free society. To visualise its communication style, imagine a community of people freely interacting, not only with their neighbours, but also with more distant people across town.

Of course, consciousness is not always present in the cerebral cortex. At night, during a dreamless sleep, differentiation is lost. Large populations of neurons are forced into agreement, firing together in the same pattern. Researchers eavesdropping with EEG (a technology that records electrical brain activity from the scalp) can hear these neurons chanting together like a monolithic crowd in a sports arena. The same loss of differentiation occurs during a generalised epileptic seizure, when large populations of neurons all fire together due to a runaway storm of excitation. As neurons lock into agreement, consciousness vanishes from the mind.

Tononi’s theory that both differentiation and integration are required for consciousness is known as integrated information theory (IIT). Using IIT, one can systematically predict which brain regions are involved in consciousness (the cerebral cortex) and which are not (the cerebellum). In the clinic, ideas derived from IIT are already helping researchers infer which brain-injured patients are conscious and which are not. Based on what IIT says that a conscious system should look like, researchers can infer what kind of response a conscious brain should give following a pulse of energy – a consciousness-detector far more probing than thinking of tennis in an MRI scan.

As Monti is fond of saying, it’s like knocking on wood to infer its density from the resulting sound. In this case, the ‘knocking’ is delivered using a coil of wire to create a magnetic pulse, a technique called transcranial magnetic stimulation (TMS). Researchers then use EEG to listen for the ‘echo’ of this magnetic perturbation, discovering what kind of ‘society’ this particular brain really is.

If the response is highly complex, integrated and differentiated, then we are dealing with a pluralistic society; different brain regions respond in different ways; the patient is probably conscious. But, if the response is uniform, appearing the same everywhere, then we are dealing with a totalitarian society, and the patient is probably unconscious.

The above approach – the best version yet of a consciousness-detector – was introduced by an international team of researchers led by the neuroscientist Marcello Massimini at the University of Milan in 2013. Formally known as the perturbational complexity index, the technique is sometimes referred to as ‘zap-and-zip’ because it involves first zapping the brain with a magnetic pulse and then looking at how difficult the EEG response is to compress, or zip, as a measure of its complexity.
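The ‘zip’ half of the idea can be illustrated with a toy example. The sketch below is not the perturbational complexity index itself, which involves further processing steps not reproduced here. It simply uses Python's general-purpose `zlib` compressor as a stand-in to show that a response in which every channel does the same thing compresses far better than one in which each channel does something different. The channel counts, the simulated on/off data and the function name `compression_ratio` are all assumptions made for illustration, and the toy captures only differentiation, not integration.

```python
import random
import zlib


def compression_ratio(signal: bytes) -> float:
    """Compressed size divided by raw size; higher means harder to compress."""
    return len(zlib.compress(signal, 9)) / len(signal)


rng = random.Random(0)  # fixed seed so the toy data are reproducible
n_channels, n_samples = 60, 200  # hypothetical recording dimensions

# 'Totalitarian' response: every channel repeats the same on/off pattern.
pattern = bytes(rng.getrandbits(1) for _ in range(n_samples))
uniform_response = pattern * n_channels

# 'Pluralistic' response: each channel follows its own on/off pattern.
diverse_response = bytes(
    rng.getrandbits(1) for _ in range(n_channels * n_samples)
)

print(f"uniform response: {compression_ratio(uniform_response):.3f}")
print(f"diverse response: {compression_ratio(diverse_response):.3f}")
```

In this toy setting the diverse response is the harder of the two to compress, which is the sense in which compressibility can stand in for complexity.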

Researchers have already used zap-and-zip to determine whether an individual is awake, deeply sleeping, under anaesthesia, or in a disorder of consciousness such as the vegetative state. Soon, the approach could tell us which unresponsive, brain-injured patients (not to mention patients anaesthetised for surgery) are covertly conscious: still feeling and experiencing, despite an inability to communicate. Indeed, this is the closest science has ever come to ‘quantifying the unquantifiable’, as the achievement is described in the journal Science Translational Medicine.

But more mysteries of consciousness remain. In the Monti Lab at the University of California, Los Angeles, I’m currently investigating why children with a rare genetic disorder called Angelman syndrome display electrical brain activity that lacks differentiation even when the kids are awake and experiencing the world around them.

There’s no question that these children are conscious, as one clearly sees from watching their rich spectrum of purposeful behaviour. And yet, placing an EEG cap on the head of a child with Angelman syndrome reveals Tononi’s metaphorical totalitarian society – neurons that appear to be locked into agreement.

Some brain activities not linked to consciousness

By showing us what types of brain activity are and aren’t essential for consciousness, patients with Angelman syndrome could offer insights into consciousness similar to those offered by patients lacking part or all of the cerebellum. My recent work in this area shows that, despite the loud chanting revealed by the EEG in Angelman syndrome, neurons nonetheless change their behaviour when these children sleep: they chant still louder, and their chanting is less rich and diverse. I’m optimistic that when someone finally does measure the neural echo through zap-and-zip on children with Angelman syndrome, it will confirm that zap-and-zip is sensitive enough to tell consciousness from dreamless sleep. If not, it will be back to the drawing board for the consciousness-detector.

Consciousness might be the last frontier of science. If IIT continues to guide us in the right direction, we’ll develop better methods of diagnosing disorders of consciousness. One day, we might even be able to turn to artificial intelligences – potential minds unlike our own – and assess whether or not they are conscious. This isn’t science fiction: many serious thinkers – including the late physicist Stephen Hawking, the technology entrepreneur Elon Musk, the computer scientist Stuart Russell at the University of California, Berkeley and the philosopher Nick Bostrom at the Future of Humanity Institute in Oxford – take recent advances in AI seriously, and are deeply concerned about the existential risk that could be posed by human- or superhuman-level AI in the future. When is unplugging an AI ethical? Whoever pulls the plug on the super AI of coming decades will want to know, however urgent their actions, whether there truly is an artificial mind slipping into darkness or just a complicated digital computer making sounds that mimic fear.

While this exact challenge has yet to confront us, scientists and philosophers are already struggling to understand a recent development of growing miniature brain-like organs in culture dishes outside of bodies. Currently, these ‘mini-brains’ are helping biomedical researchers understand diseases affecting the brain, but what if they eventually develop consciousness – and the capacity to suffer – as biological engineering grows more sophisticated in years to come? As the challenges of mini-brains and AI both make clear, the study of consciousness has shed its esoteric roots and is no longer a mere pastime of the ivory tower. Understanding consciousness really matters – after all, the wellbeing of conscious minds depends on it.

Joel Frohlich is a postdoctoral researcher studying consciousness in the laboratory of Martin Monti at the University of California, Los Angeles. He is also a content producer at Knowing Neurons. Joel can be found on Twitter @joel_frohlich

A version of this article was originally posted at Aeon and has been reposted here with permission. Aeon can be found on Twitter @aeonmag