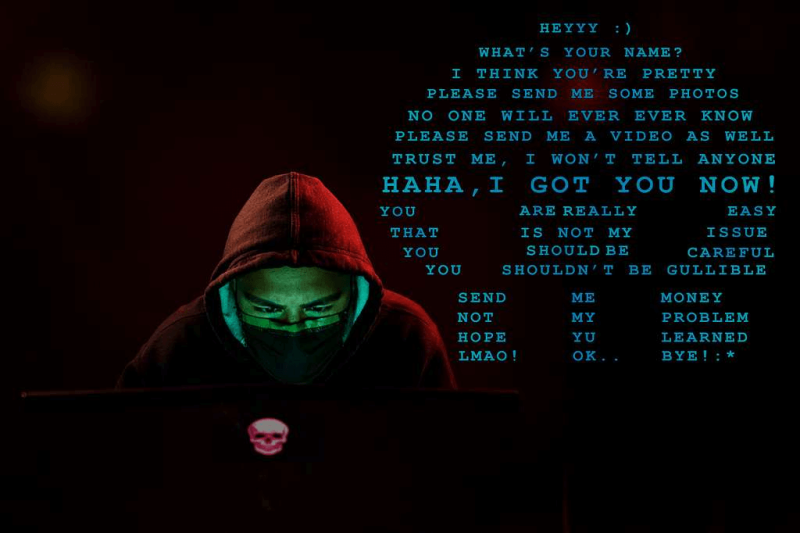

Innocent beach photos or bare-chested gym pictures of men can be manipulated into sexually explicit, AI-generated “deepfakes” that are then weaponized against panicked and embarrassed teens and preteens.

Such cases have led to an alarming number of suicides among victims, experts said. But AI-powered technology can detect anything it considers “sexually explicit” and block it from ever seeing the light of day.

Yaron Litwin, an executive at Canopy, developed AI software that blocks these types of images, even innocent bathing-suit photos from the beach, from ever being sent, and then alerts the parents.

“Our technology is an AI platform that has been developed over 14 years … and can recognize images with video and an image in split seconds,” Litwin told Fox News Digital.

“The platform itself will also filter out in real time any explicit image as you’re browsing through a website or an app and really prevent a lot of pornography from appearing online.”

It’s essentially an extra layer of protection that stops naive children and teens from sharing even innocent pictures or videos that criminals can use against them.

“We like to call it AI for good,” Litwin said. “It’s a way to protect our kids.”