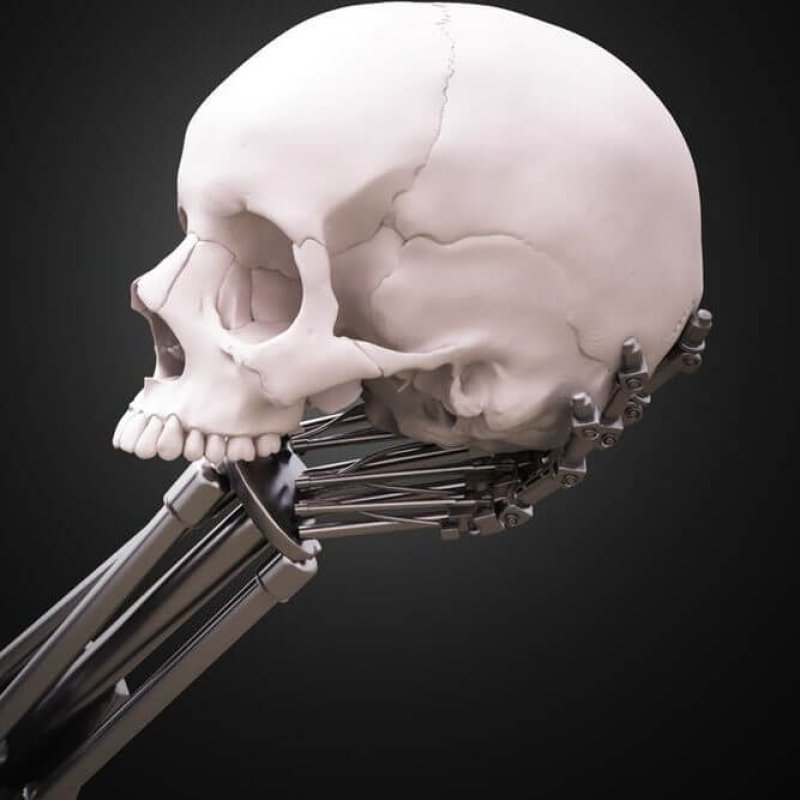

If there’s nothing magical about our brains or essential about the carbon atoms that make them up, then we can imagine eventually building machines that possess all the same cognitive abilities we do.

…

Human intelligence has been optimized under specific constraints, such as the need to conserve calories and to fit the head through the birth canal, whereas artificial intelligence will operate under different constraints that are likely to allow for much larger and faster minds.

…

AI systems are likely to lack human motivations such as aggression, but they are also likely to lack human motivations such as empathy, fairness, and respect. Their decision criteria will simply be whatever goals we design them to have, and if we misspecify these goals even in small ways, the resulting goals are likely not only to diverge from our own but to actively conflict with them.

…

To avoid inadvertently building a powerful adversary, and to leverage the many potential benefits of AI for the common good, we will need either to find some way to constrain artificial general intelligence (AGI) to pursue limited goals or employ limited resources, or to find extremely reliable ways to instill our goals in AGI systems.

The GLP aggregated and excerpted this blog/article to reflect the diversity of news, opinion, and analysis. Read full, original post: Why we should be concerned about artificial superintelligence